Tips for Designing a Comprehensive Training Evaluation Strategy

How do we know that training courses are working?

How do L&D teams prove to their executive sponsors and other key stakeholders that training programs (and the sometimes hundreds of thousands of dollars spent to produce them) are fostering actual improvements in employee performance and helping the organization achieve its mission-critical business objectives? How do organizations gauge the effectiveness and impact of their development initiatives? With a training evaluation strategy in place, companies can see what is working and what is not.

Building a Training Evaluation Strategy that Works

Corporate trainers, instructional designers, performance consultants and other L&D professionals routinely invoke Kirkpatrick’s Four-Level Training Evaluation Model as the go-to metric for measuring the effectiveness of particular training solutions. And yet, evidence shows that many CEOs are heavily suspicious of the results of their training programs.

For example, Jack J. Phillips and Patricia Pulliam Phillips asked 96 Fortune 500 CEOs about the success of their learning and development programs. Alarmingly, only 8% of the CEOs said they noticed an immediate business impact and 96% of CEOs expressed a desire to see a more explicit connection between training and ROI. The point here is not that Kirkpatrick’s time-tested evaluation model is an instructionally bankrupt methodology. Instead, this evidence suggests the model is not being used effectively.

One of the most integral dimensions of a training solution should be a thorough and insightful evaluation strategy, one that measures the success of our training by how it helps achieve business goals. In that spirit, I want to define each level of the model, then provide concrete examples of how you can approach each level in a way that truly communicates value.

Level 1: Reaction

The first level measures how your learners react to the training. Obviously, organizations want their employees to feel training was an engaging and relevant experience, especially as it relates to on-the-job responsibilities and expectations. L&D specialists conventionally design these evaluations as summative surveys that feature Likert-type questions as well as open-ended questions. Such questions could include the following:

- Were you engaged throughout the entire training?

- Did it speak to your needs?

- Were your mentors competent in executing the training?

- Would you recommend this training to new hires? Would this be useful to new hires?

- On a scale from 1 to 5, how confident are you in [name of learning objective]?

However, this first level generally gets a bad rap. Senior executives often find participant feedback more or less irrelevant. And they’re right, especially when the questions and feedback are disconnected from the organization’s broader business goals. Yet gathering data on learners’ reactions can be a valuable asset for organizations, because it helps L&D professionals modify and improve the training for future learners, especially as business objectives evolve.

The key takeaway here is that gauging learners’ reactions is a useful first step for evaluating the success of training programs. But it is only a first step, and L&D specialists must incorporate additional metrics in order to provide a more robust evaluation strategy.
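As a rough illustration of how Level 1 survey data might be summarized, the sketch below computes a mean rating and a "favorable" share for each Likert-type question. The question names, the ratings, and the cutoff of 4 for a favorable response are all hypothetical and would need to match your own survey design.

```python
from statistics import mean

# Hypothetical responses: question -> ratings on a 1-5 Likert scale.
responses = {
    "engaged_throughout": [5, 4, 4, 3, 5],
    "relevant_to_my_role": [4, 4, 2, 3, 5],
    "would_recommend": [5, 5, 4, 4, 3],
}


def summarize(ratings, favorable_at=4):
    """Return the mean rating and the share of ratings at or above favorable_at."""
    favorable = sum(1 for r in ratings if r >= favorable_at)
    return {
        "mean": round(mean(ratings), 2),
        "favorable_share": round(favorable / len(ratings), 2),
    }


summary = {question: summarize(ratings) for question, ratings in responses.items()}

for question, stats in summary.items():
    print(f"{question}: mean={stats['mean']}, favorable={stats['favorable_share']:.0%}")
```

A summary like this is easier to tie back to business goals when each question maps to a specific learning objective rather than to general satisfaction.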

Level 2: Learning

The second level measures the KSAs (knowledge, skills, attitudes) the learner has acquired from the training. What do the learners know, do and feel now that the training is over, and how do those KSAs relate to the key learning objectives? Tracking what and how much was learned is important, because it lets L&D specialists improve future training.

One effective way to measure the second level is to use a diagnostic test as well as a summative test that will demonstrate the differences between the learner’s knowledge before and after the training. Consider a web-based training curriculum we recently developed for a global marketing company in need of training employees to communicate a consistent brand message to clients.

(All examples have client names obscured.)

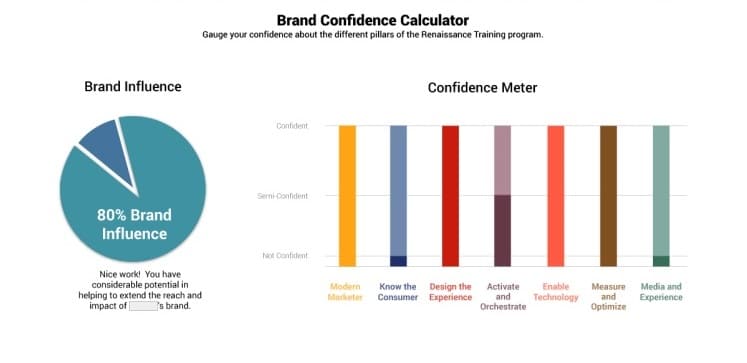

In this example, the first activity is a “Brand Confidence Calculator”, which asks learners to gauge their confidence concerning a set of training topics. Once learners complete the activity, a results page displays their degree of confidence for each of the key learning objectives. This diagnostic test personalizes the training as it prompts the learner to acknowledge some of their training needs.

We also developed a summative activity that measures the learner’s mastery over key training topics. The results page juxtaposes their diagnostic test with the final test scores. Doing so helps the learner visualize and acknowledge the progress they made over the course of the learning lifecycle, from beginning to end. This evaluation strategy personalizes the learning experience and encourages learners to derive a sense of satisfaction in their learning development.
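The before-and-after comparison described above could be computed along these lines. This is a minimal sketch, not the logic of the actual course: the objective names and scores are invented, and it assumes the diagnostic and summative results are both expressed on the same 0 to 100 scale. The normalized gain shown here (the share of the available headroom the learner closed) is one common way to compare learners who start at different levels.

```python
# Hypothetical scores (0-100) per learning objective for one learner.
diagnostic = {"brand_voice": 40, "visual_identity": 55, "client_messaging": 70}
summative = {"brand_voice": 85, "visual_identity": 80, "client_messaging": 90}


def compare_scores(before, after):
    """Return the raw gain and normalized gain for each objective.

    Normalized gain = (after - before) / (100 - before), i.e. the share of
    the available headroom that the learner actually closed.
    """
    report = {}
    for objective, pre in before.items():
        post = after[objective]
        headroom = 100 - pre
        report[objective] = {
            "before": pre,
            "after": post,
            "gain": post - pre,
            # Guard against division by zero when the learner started at 100.
            "normalized_gain": round((post - pre) / headroom, 2) if headroom else 0.0,
        }
    return report


report = compare_scores(diagnostic, summative)
for objective, row in report.items():
    print(f"{objective}: {row['before']} -> {row['after']} (gain {row['gain']:+d})")
```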

Level 3: Behavior

In the third level, L&D specialists examine how the learner applies the knowledge, skills and attitudes to on-the-job performance. One of the challenges in gauging this kind of metric is that these behavioral changes take time, and usually require observations from colleagues and managers.

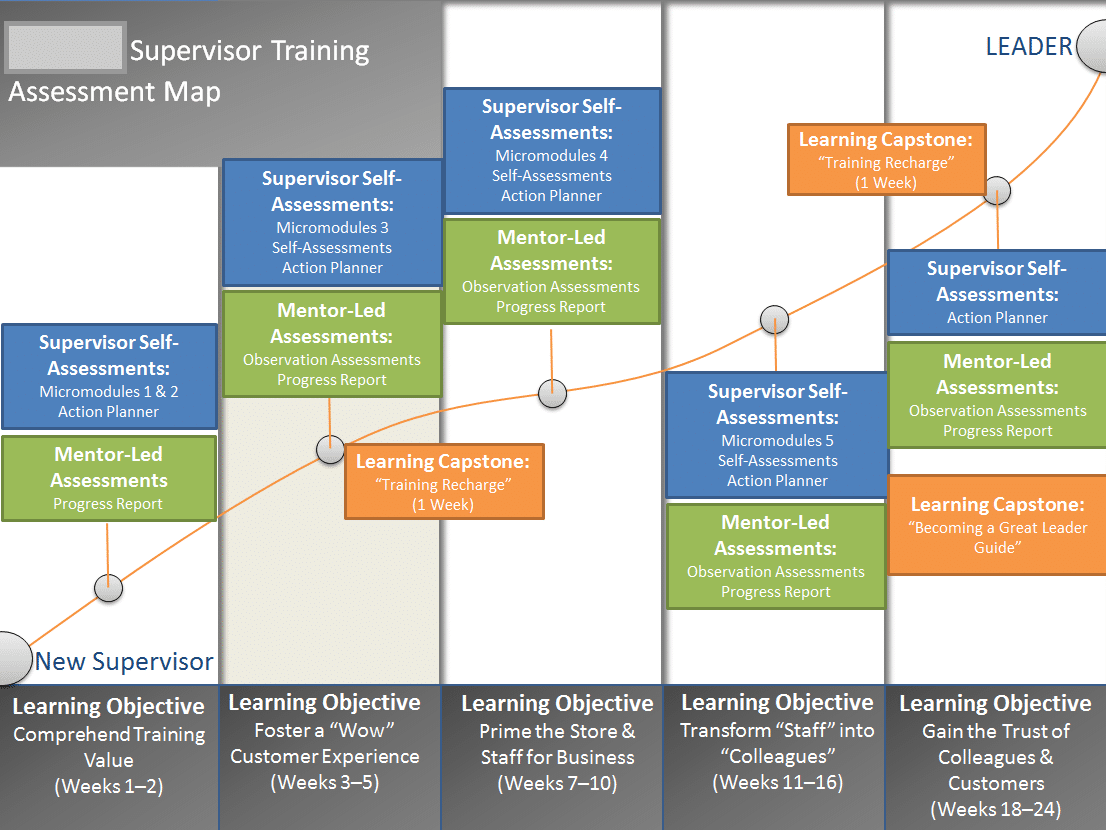

You can analyze changes in behavior by integrating a combination of formative assessments and management observations throughout the duration of the learning lifecycle. A recent project we developed for a national retailer targeted training new supervisors in basic leadership and technical skills.

First, managers completed weekly and monthly observation worksheets that gauged how well the learner had mastered the key learning objectives for that stage. Second, learners had to complete “Learning Capstones”. To prepare for the Capstones, the manager put the data from the mentor-led assessments and weekly observations into a set of talking points and reinforcement activities. The Capstones ensured the learner was making measurable improvements. In the final month of training, managers assigned a set of “expert exercises” that required the learner to flex multiple aspects of their training. Managers then evaluated the learner’s success at completing the exercises and conducted a one-on-one discussion about performance.

This training strategy effectively gauges changes in behavior, because it (1) provides opportunities at various stages of the training for management to observe and track the learner’s performance and (2) assigns additional exercises that reinforce key training objectives. Ultimately, to measure behavioral changes effectively, L&D specialists must design an assessment strategy that occurs over time and throughout the learning lifecycle, often with manager-driven observational assessments.

Level 4: Results

The final level measures the extent to which the training achieved key business goals, such as reducing overhead costs, increasing sales, improving customer service calls, decreasing compliance violations and so forth. For L&D specialists, it’s helpful to define training results not in terms of ROI (Return on Investment) but in terms of ROE (Return on Expectations), which should be clearly defined at the outset of the training.

For example, we recently developed a performance support application that helped a manufacturing company reduce the downtime of its equipment.

The client’s expectations involved training employees to regularly use the performance support application in order to optimize how quickly they repair dysfunctional equipment. To prove the application would meet expectations, we incorporated a reporting feature that provided management with dashboards and data visualizations displaying some of the following: the number of employees who are using the application, how often they use it, a breakdown of usage between and across facilities, leaderboards for gamified exercises, as well as the most frequently visited pages in the reference library.
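To make the reporting idea concrete, here is a small sketch of how raw usage events might be rolled up into the kinds of figures listed above: active employees, usage frequency, a per-facility breakdown, and the most visited reference pages. The event format, employee IDs, facility names, and page names are all hypothetical and do not reflect the client’s actual application or data.

```python
from collections import Counter

# Hypothetical usage events: (employee_id, facility, page).
events = [
    ("e1", "plant_a", "pump_reset"),
    ("e1", "plant_a", "belt_alignment"),
    ("e2", "plant_a", "pump_reset"),
    ("e3", "plant_b", "pump_reset"),
    ("e3", "plant_b", "sensor_calibration"),
    ("e3", "plant_b", "pump_reset"),
]


def usage_report(events, top_n=2):
    """Aggregate raw usage events into dashboard-style metrics."""
    events_per_employee = Counter(emp for emp, _, _ in events)
    events_per_facility = Counter(fac for _, fac, _ in events)
    page_views = Counter(page for _, _, page in events)
    return {
        "active_employees": len(events_per_employee),
        "events_per_employee": dict(events_per_employee),
        "events_per_facility": dict(events_per_facility),
        "top_pages": page_views.most_common(top_n),
    }


report = usage_report(events)
print(report["active_employees"], "active employees")
print("Most visited page:", report["top_pages"][0])
```

Usage figures like these only speak to ROE when they are read against the expectation set at the outset, which here was faster repair of equipment.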


This data-driven design sidesteps the data-reporting limitations of many LMS platforms and SCORM packages, and it helps senior management monitor and benchmark how well the training solution is being used to resolve actual problems in the work environment. By turning these datasets into easily accessed insights about how learners use the training solution, at both a granular and a company-wide level, clients can measure ROE more concretely.

Ultimately, Kirkpatrick’s Four-Level Training Evaluation Model remains a useful methodology for gauging the effectiveness of particular training programs. The basis of a strong training solution is an agile evaluation strategy that takes stock of the impact of learning and development courses. Training succeeds when L&D specialists integrate a comprehensive evaluation strategy into the curriculum from the earliest stages of ideation and design.